In many performance discussions, automation is treated as the hero. How do we save time? How do we reduce manual optimisation? How do we let algorithms scale faster?

Those are valid questions. But they distract from a more important one: On which data foundation is your automation actually running?

The hidden problem with platform-native signals

Your bidding algorithms are only as good as the conversion signal you give them. In most setups, that signal comes from one of two places, yet in both cases the underlying conversion logic is the same.

Whether you’re optimising directly within a platform’s native interface or consolidating numbers into a reporting layer or dashboard (read more about owning vs. organising your data here), the conversion logic is still platform-attributed: aggregated, modelled, and pre-processed by the same media owners who benefit from your spend. Google tells you what Google contributed, Meta tells you what Meta contributed, and your bidding systems learn accordingly.

When that happens, your algorithms are not scaling business impact. They’re scaling platform credit.

This distinction matters more than most teams realise. Over time, bidding algorithms trained on platform-reported conversions will systematically over-invest in channels that are good at claiming credit, not necessarily in the ones that are good at driving incremental demand. The bias compounds quietly, cycle after cycle, until budgets drift far from where the real value is.
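To make the compounding effect concrete, here is a minimal toy model in Python. It is a sketch under stated assumptions, not a description of how any real bidding system works: two hypothetical channels deliver the same true incremental return per euro, one of them over-claims credit by an assumed factor, and budget is reallocated each cycle in proportion to the conversions used as the signal. The function name, the rates, and the claim factors are all illustrative, and effects such as diminishing returns are ignored.

```python
# Toy model: two channels with identical true incremental return per euro,
# but channel "A" claims more credit than it drives. All numbers are
# illustrative assumptions, not measured data.

def simulate_budget_drift(cycles, claim_factor, total_budget=100.0):
    """Reallocate budget each cycle in proportion to the conversion signal.

    claim_factor maps channel -> multiplier applied to the true conversions
    to obtain the conversions used as the signal (1.0 means unbiased).
    Returns the budget share of channel "A" at the start of each cycle.
    """
    true_rate = {"A": 0.05, "B": 0.05}  # assumed incremental conversions per euro
    share = {"A": 0.5, "B": 0.5}        # start with an even split
    history = []
    for _ in range(cycles):
        history.append(share["A"])
        # Conversions as seen by the optimiser: true conversions times claim factor.
        reported = {
            ch: share[ch] * total_budget * true_rate[ch] * claim_factor[ch]
            for ch in share
        }
        total_reported = sum(reported.values())
        # Next cycle's budget follows the reported signal.
        share = {ch: reported[ch] / total_reported for ch in share}
    return history


biased = simulate_budget_drift(10, {"A": 1.3, "B": 1.0})
neutral = simulate_budget_drift(10, {"A": 1.0, "B": 1.0})
print(f"share of A after 10 cycles, biased signal:  {biased[-1]:.2f}")
print(f"share of A after 10 cycles, neutral signal: {neutral[-1]:.2f}")
```

In this simplified setup, both channels are equally valuable, so the neutral signal keeps the split even. With the biased signal, the over-claiming channel's share grows every cycle even though its true contribution never changes.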

The real shift: owning your optimisation signal

The real shift happens when conversion contribution is calculated on an independent measurement layer, based on cross-channel customer journeys and data you own, not data that platforms pre-process for you.

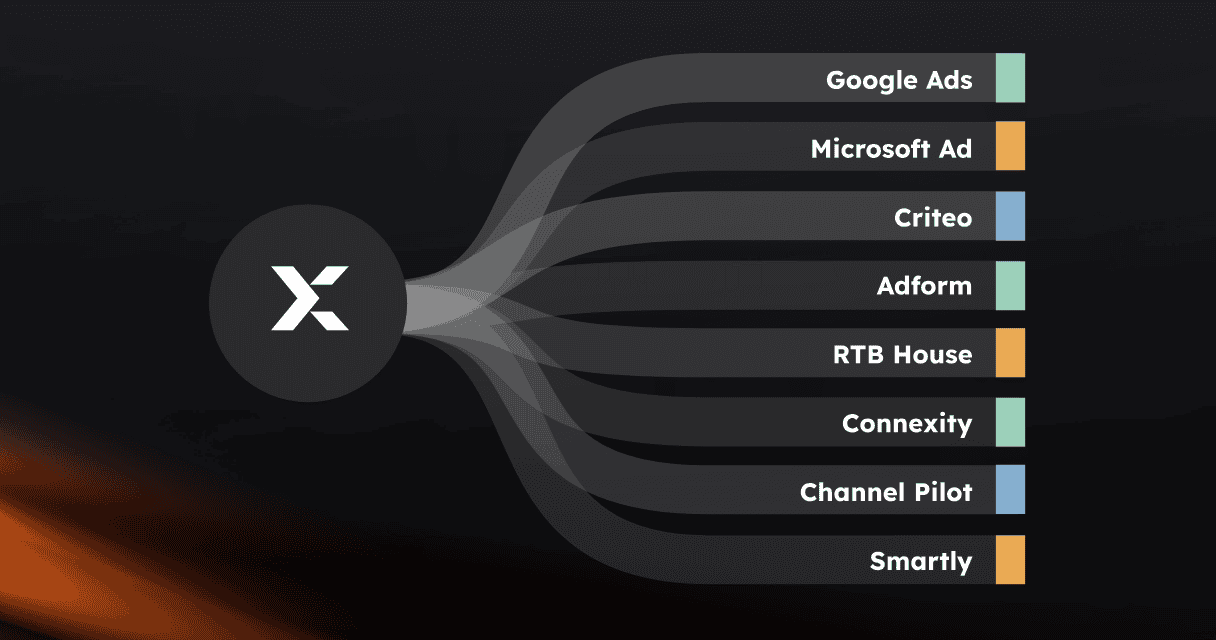

At Exactag, attributed conversion values are calculated on a neutral, journey-based foundation. Every touchpoint across the full customer journey (search, social, display, programmatic, and more) is evaluated for its actual incremental contribution to conversion.

With Attribution Push (read more about it here), those independently attributed values are automatically fed back into search, social, and programmatic bidding systems on a daily basis. The algorithm still does what it’s designed to do: learn and optimise at scale. The difference is what it’s learning from. Instead of platform credit, it learns business impact.
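As a rough illustration of what a daily feedback step could look like, the Python sketch below sums independently attributed values per click ID for one channel and one day, then serialises them as upload rows. Everything here is hypothetical: the field names (`click_id`, `attributed_value`, `conversion_value`), the sample journeys, and the CSV layout are assumptions for illustration. They are not Exactag's actual data model and not any ad platform's real upload schema.

```python
import csv
import io
from collections import defaultdict
from datetime import date

# Hypothetical output of an independent attribution layer: one row per
# (order, touchpoint) with the share of order value credited to that touchpoint.
attributed_touchpoints = [
    {"order_id": "o-1", "channel": "search", "click_id": "clk-a",
     "day": date(2024, 5, 1), "attributed_value": 60.0},
    {"order_id": "o-1", "channel": "social", "click_id": "clk-s",
     "day": date(2024, 5, 1), "attributed_value": 40.0},
    {"order_id": "o-2", "channel": "search", "click_id": "clk-a",
     "day": date(2024, 5, 1), "attributed_value": 20.0},
    {"order_id": "o-2", "channel": "search", "click_id": "clk-b",
     "day": date(2024, 5, 1), "attributed_value": 30.0},
]


def build_daily_push(rows, channel, day):
    """Sum attributed value per click ID for one channel and one day."""
    totals = defaultdict(float)
    for row in rows:
        if row["channel"] == channel and row["day"] == day:
            totals[row["click_id"]] += row["attributed_value"]
    return [
        {"click_id": click_id, "conversion_value": round(value, 2)}
        for click_id, value in sorted(totals.items())
    ]


def to_csv(push_rows):
    """Serialise push rows to CSV text (hypothetical upload format)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["click_id", "conversion_value"])
    writer.writeheader()
    writer.writerows(push_rows)
    return buffer.getvalue()


search_push = build_daily_push(attributed_touchpoints, "search", date(2024, 5, 1))
print(to_csv(search_push))
```

The point of the sketch is the direction of the data flow: the value attached to each click comes from the journey-level attribution, and the bidding system only receives the result.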

“Exactag doesn’t just analyse data. We collect it ourselves, ensuring a neutral, complete, and granular foundation that no platform or analytics tool can provide.”

— Katharina Thürer, Exactag

The questions worth asking

Before automating more, it’s worth pausing on four questions:

- What conversion data are we currently feeding into our bidding systems: platform conversions, or our own attributed values?

- Who defines the optimisation metric: us, or the platform?

- Have we ever tested platform-native optimisation against independently attributed optimisation?

- What is the long-term cost of training algorithms on biased signals?

That last question is easy to underestimate. The cost of a single misconfigured campaign is visible and recoverable. The cost of months or years of automation learning from systematically biased data is structural. It shapes budget allocation, channel mix, and strategic assumptions in ways that are much harder to unwind.

Automation learns from the signal you provide. If that signal reflects what platforms want you to believe, automation will optimise for that. If the signal reflects what actually drives business outcomes, automation will optimise for that instead.

Smarter signal, smarter results: Bett1 Case

When Bett1 shifted to Attribution Push, SEA (search engine advertising) costs dropped by 43% because they changed what their bidding strategy was learning from. Read the full story here.

Curious what would change if your bidding systems learned from your data instead of platform logic? Happy to exchange thoughts.